Before this project, my experience with Go was pretty limited. I’d written a few small Lambda functions and image utilities, but nothing that really tested Go’s concurrency model or scalability.

Lately I’ve been dipping my toes into the message queue ecosystem using RabbitMQ, BullMQ and SQS. I’ve always loved RabbitMQ. It’s been reliable, battle-tested, and I’ve used it in multiple projects — including Drivnbye — where it powers webhook handling, image processing, billing, and email/push notifications. It’s great for most async workloads, but it doesn’t natively support scheduled messages that might need to sit idle for hours or even weeks before delivery.

After some investigation I didn’t find to many options of a message queue that supported long-standing scheduled messages.

That gap got me thinking: could I build a lightweight, simple, and scalable scheduled message queue that does one job and does it extremely well?

SMQ was born from that idea. It wasn’t built because I needed it right away — it was built because I wanted to push my understanding of Go, concurrency, multi-region systems, and resilient architecture to a new level.

Goals

My goal for SMQ was simple:

Build a highly scalable, lightweight, customizable, and simple scheduled message queue that does one job and does it amazingly.

Along the way, I wanted to explore:

- Go routines and concurrency coordination

- Database modeling for distributed systems using cockroachDB

- Resilience through WAL (Write-Ahead Log) for the buffer layer

- Multi-region scheduling and failover

- Real-world performance and bottlenecks

System Overview

graph TB

subgraph "External Clients"

P[Producers]

C[Consumers]

M[Monitoring]

end

subgraph "SMQ Application Nodes"

subgraph "Producer Service"

PA[Producer API<br/>POST /v1/message<br/>DELETE /v1/message/:id<br/><i>Single Instance</i>]

end

subgraph "Consumer Service"

CA[Consumer API<br/>GET /v1/channels/:channel/poll<br/>POST /v1/messages/ack<br/>POST /v1/messages/nack<br/><i>Single Instance</i>]

end

subgraph "Background Processes"

S[Scheduler Process<br/>Marks messages as 'ready'<br/><i>Multiple Instances</i>]

J[Janitor Process<br/>Handles stale messages<br/><i>Multiple Instances</i>]

B[Buffer Workers<br/>Memory or disk/WAL<br/>Batch write to DB<br/><i>Multiple Workers</i>]

end

subgraph "Health Service"

H[Health API<br/>GET /v1/health<br/>Monitors all services<br/><i>Single Instance</i>]

end

end

subgraph "Data Layer"

DB[(Database<br/>Postgres / CockroachDB)]

end

%% Producer flow

P -->|Create/Delete Message| PA

PA -->|Write to Buffer| B

B -->|Batch Insert| DB

%% Consumer flow

C -->|Poll/Ack/Nack| CA

CA -->|SELECT FOR UPDATE<br/>SKIP LOCKED| DB

%% Scheduler flow

S -->|UPDATE messages<br/>to 'ready'| DB

%% Janitor flow

J -->|Cleanup stale<br/>messages & nodes| DB

%% Health monitoring flow

M -->|Check Health| H

H -.->|Monitor| PA

H -.->|Monitor| CA

H -.->|Monitor| S

H -.->|Monitor| J

H -.->|Monitor| B

H -->|Store Health<br/>Metadata| DB

style DB fill:#e1f5ff

style PA fill:#ffe1e1

style CA fill:#e1ffe1

style S fill:#fff5e1

style J fill:#fff5e1

style B fill:#ffe1ff

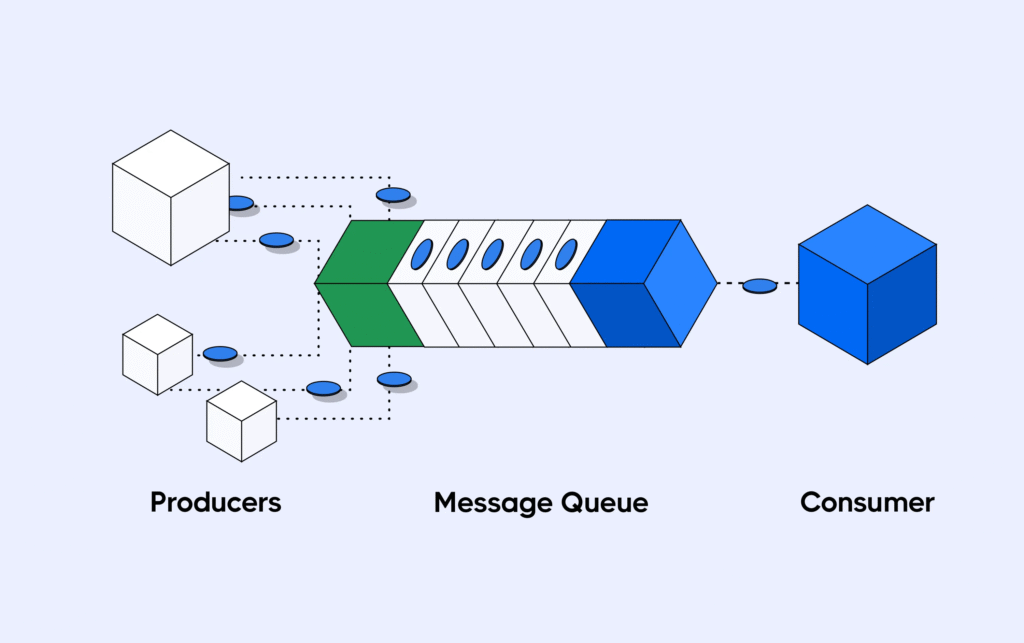

style H fill:#f0f0f0SMQ is broken into several independent layers within the SMQ node, each focused on a single responsibility. Together, they form a distributed, multi-layer queue system that can scale horizontally while remaining simple to reason about.

Producer Layer

A REST API that lets clients create or delete messages. Producers define when a message should be delivered and what channel it belongs to. It’s intentionally minimal and stateless.

Buffer Layer

Messages first land in a buffer, which can be configured as:

- In-memory (high performance, low durability)

- WAL (slightly slower, but resilient on crash)

The buffer is flushed to the database on an interval that is configurable. WAL mode ensures that even if the process crashes mid-write, messages aren’t lost.

Scheduler Layer

The scheduler continuously scans the database for messages that are ready to deliver. It marks these messages as READY by acquiring short-lived leases that prevent other schedulers from processing the same messages.

This approach avoids the need for complex database sharding while keeping multi-region clusters consistent and predictable.

Consumer Layer

Consumers poll the system for messages, acknowledge them when processed (ack), or requeue them if something goes wrong (nack) with built in support for DLQs (dead letter queues) or discarding a message after X retries if it has not been acked.

Consumers never communicate directly with the scheduler — instead, they pull from the database where the scheduler updates, keeping system responsibilities clean and decoupled.

Janitor Layer

This background process handles cleanup, retries, and node monitoring. If a node fails to deliver or loses connection mid-job, the janitor identifies and recycles those messages back to READY status for future delivery.

This layer also handles identifying, logging and discarding stale nodes where it appears they have gone offline

Health Layer

A lightweight REST endpoint that exposes metrics and health checks across all layers. It ensures the system can be monitored easily, and it helps detect bottlenecks or unhealthy nodes. It also monitors each layer’s health to report.

Region-Aware Janitor and Scheduler Logic

One of the more interesting challenges in a multi-region CockroachDB deployment was preventing cross-region cleanup or message acquisition conflicts. To solve this, I made both the scheduler and janitor (optionally) region-aware.

For example, the janitor can be region-specific — it only marks stale ACQUIRED messages as READY within its own region. This prevents multiple regions from trying to recover or process the same messages simultaneously.

Similarly, the message acquisition logic has both local and non-local modes. The consumer layer first attempts to acquire messages from its local region using the crdb_region = gateway_region() filter, then (optionally) queries other regions if there’s available capacity. This ensures that latency-sensitive delivery stays local while still maintaining global redundancy.

Databases and Multi-Region Setup

SMQ currently supports CockroachDB and PostgreSQL, with Cockroach being the focus because of its built-in distributed nature.

Each node is aware of its datacenter or region. The multi-region layer is purely metadata, not sharding — schedulers acquire messages independently, and database-level locks ensure only one scheduler owns a message at a time.

If a scheduler fails, leases expire and other schedulers can safely pick up those messages. This makes the system highly resilient to node failures or regional outages without requiring complex partitioning logic.

CockroachDB’s REGIONAL BY ROW feature powers much of the multi-region logic. Each message row is automatically stored in the same region as the SQL gateway that inserted it. This allows the consumer layer to query locally-owned messages first — improving latency and throughput — while still being able to fail over globally if needed. The system also uses region filters like crdb_region = gateway_region() to enforce local-only operations where appropriate.

Race Conditions and Coordination

Early versions of SMQ ran into the classic distributed-system problem: schedulers in different regions trying to acquire the same messages.

The solution was to:

- Introduce short-term database leases during message acquisition.

- Use status transitions (

PENDING → ACQUIRED → READY) enforced by the database. - Keep the scheduler’s logic simple and deterministic.

By offloading concurrency control to the database rather than trying to coordinate across nodes directly, we avoided a whole class of race condition issues while keeping the architecture clean.

To prevent race conditions in a distributed setup, message acquisition uses CockroachDB’s FOR UPDATE SKIP LOCKED clause inside a transaction. Combined with region-based filtering, this ensures only one node in a specific region can acquire a message at a time — while other regions remain unaffected. The result is deterministic, atomic message claiming that scales safely across regions.

Design Choices and Tradeoffs

Go and Routines

Go’s lightweight concurrency model made it easy to structure the system as independent loops:

- One loop for producing messages

- One for consuming

- One for scheduling

- One for janitor cleanup

Each layer runs as its own routine, which made it natural to think in terms of “mini-services” running inside the same process.

WAL and Buffering

Adding a Write-Ahead Log for the buffer layer was a major learning experience. It dramatically improved reliability by decoupling in-memory performance from persistence along with allowing the system to recover when a node crashes

The tradeoff is a small delay during flush intervals, but the durability benefits far outweigh it.

Eventually, I’d like to add Redis as an optional buffer option — allowing the system to combine WAL durability with Redis’ low-latency access patterns.

CockroachDB

CockroachDB’s distributed SQL model fits SMQ perfectly. Its strong consistency guarantees, automatic replication, and region-awareness simplified a lot of the coordination logic I’d otherwise have to handle manually.

Performance

Even on minimal infrastructure (a free CockroachDB instance and the cheapest DigitalOcean droplet), SMQ performs impressively well:

- Health check (GET): ~1ms

- Create message (POST): ~7ms

- Poll message (GET): ~12ms

These numbers come from real-world deployment tests and demonstrate that the architecture can scale even with modest resources.

Lessons Learned

This project taught me more about distributed systems than any tutorial could:

- Go routines are powerful, but coordinating them safely requires a lot of pre-planning.

- WAL and buffering layers demand careful thought around durability vs. latency.

- Multi-region systems are less about clever code and more about predictable coordination.

- Observability (the health layer, janitor logs) is what turns a this project into resilient.

I also learned to appreciate the simplicity of letting the database handle the hard problems — from locking to consistency — instead of re-inventing that logic in Go.

What’s Next

There’s still some room to grow. I plan to:

- Add Redis as a caching layer for the scheduler

- Use Redis for buffering high-throughput workloads

- More investigation in performance under load

While SMQ started as a learning project, it’s shaping up to be a real tool I could see powering scheduled notifications or marketing messages for apps like Drivnbye.

Leave a Reply